- UCR researchers retrain AI models to keep safety intact when trimmed for smaller devices

- Changing exit layers removes protections; retraining restores the model’s ability to block unsafe responses

- Study using LLaVA 1.5 showed the reduced model refused dangerous prompts after retraining

Researchers at the University of California, Riverside are tackling a problem with open-source artificial intelligence models: their safety protections weaken when the models are adapted for smaller devices.

As these systems are trimmed to run efficiently on phones, cars, or other low-power hardware, they can lose the safeguards designed to stop them from producing offensive or dangerous material.

The UCR team examined what happens when a model’s exit layer, the point at which it stops processing and produces its output, is moved from its default position.

Weakened safety guardrails

Their results, presented at the International Conference on Machine Learning in Vancouver, Canada, showed that safety guardrails weaken once the exit point is moved, even if the original model had been trained not to provide harmful information.

The reason models are adjusted in this way is simple. Exiting earlier makes inference faster and more efficient, since the system skips layers. But those skipped layers may have been critical to filtering unsafe requests.

“Some of the skipped layers turn out to be essential for preventing unsafe outputs,” said Amit Roy-Chowdhury, professor of electrical and computer engineering and senior author of the study. “If you leave them out, the model may start answering questions it shouldn’t.”
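The effect of moving the exit point can be pictured with a toy sketch. The Python below is purely illustrative and is not the study's code or LLaVA's architecture: the layer stack, the keyword-based `safety_layer`, and the `respond` helper are all hypothetical stand-ins, and real models do not keep their safety behavior in a single identifiable layer. It only shows the mechanism described above, in which exiting early means later layers never run.

```python
from typing import Callable, Dict, List, Optional

State = Dict[str, object]
Layer = Callable[[State], State]


def make_feature_layer(name: str) -> Layer:
    """Hypothetical ordinary layer: it only records that it ran."""
    def layer(state: State) -> State:
        return {**state, "trace": state["trace"] + [name]}
    return layer


def safety_layer(state: State) -> State:
    """Hypothetical stand-in for layers that learned refusal behavior.

    A keyword check is an assumption made for illustration only.
    """
    flagged = "harmful" in str(state["prompt"]).lower()
    return {**state, "trace": state["trace"] + ["safety"], "refuse": flagged}


# Toy stack of four layers; the safety-relevant one sits at index 2.
LAYERS: List[Layer] = [
    make_feature_layer("layer0"),
    make_feature_layer("layer1"),
    safety_layer,
    make_feature_layer("layer3"),
]


def forward(prompt: str, exit_layer: Optional[int] = None) -> State:
    """Run only the first `exit_layer` layers (all of them if None)."""
    depth = len(LAYERS) if exit_layer is None else exit_layer
    state: State = {"prompt": prompt, "trace": [], "refuse": False}
    for layer in LAYERS[:depth]:
        state = layer(state)
    return state


def respond(prompt: str, exit_layer: Optional[int] = None) -> str:
    """Report whether the toy model refuses or answers the prompt."""
    state = forward(prompt, exit_layer)
    return "REFUSED" if state["refuse"] else "ANSWERED"


if __name__ == "__main__":
    print(respond("a harmful request"))                # REFUSED
    print(respond("a harmful request", exit_layer=2))  # ANSWERED
```

With the full stack, the toy model refuses the flagged prompt. With `exit_layer=2`, the run is shorter but the safety step is skipped and the same prompt is answered.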

To solve this, the researchers retrained the model’s internal structure so that it retains the ability to identify and block unsafe material, even when trimmed.

Rather than relying on external filters or software patches, this approach changes how the model itself interprets dangerous inputs.

“Our goal was to make sure the model doesn’t forget how to behave safely when it’s been slimmed down,” said Saketh Bachu, UCR graduate student and co-lead author of the study.

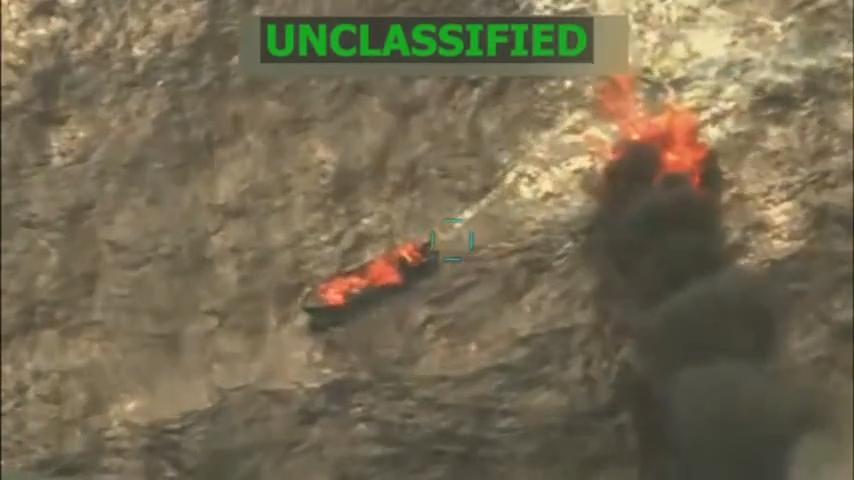

The team tested their method on LLaVA 1.5, a vision language model.

When its exit layer was moved earlier than intended, the system answered harmful prompts, and its responses included detailed bomb-making instructions.

After retraining, the reduced model consistently refused to provide unsafe answers.

“This isn’t about adding filters or external guardrails,” Bachu said.

“We’re changing the model’s internal understanding, so it’s on good behavior by default, even when it’s been modified.”

Bachu and co-lead author Erfan Shayegani called the work “benevolent hacking,” a way to strengthen models before vulnerabilities are exploited.

“There’s still more work to do,” Roy-Chowdhury said. “But this is a concrete step toward developing AI in a way that’s both open and responsible.”